Manually writing OPA Rego policies is a significant bottleneck for many platform teams, creating a ‘Rego tax’ that can slow down development and introduce risk. This article introduces a new approach: a Dynamic Kubernetes Policy Generator that uses a large language model (LLM) to analyze live clusters and automatically generate context-aware OPA Gatekeeper policies.

We’ve built a Dynamic Kubernetes Policy Generator designed to bridge the gap between live cluster state and security governance. The solution uses an AI-powered CLI assistant (built on Claude Code’s agentic capabilities) and the Model Context Protocol (MCP) to automate the detection and remediation of cluster violations. It moves beyond static templates to analyze live infrastructure and generate context-aware OPA Gatekeeper policies in minutes. By turning manual governance into an automated, data-driven service, platform teams can move at the speed of development without sacrificing security.

Note: Red Hat’s Emerging Technologies blog includes posts that discuss technologies that are under active development in upstream open source communities and at Red Hat. We believe in sharing early and often the things we’re working on, but we want to note that unless otherwise stated the technologies and how-tos shared here aren’t part of supported products, nor promised to be in the future.

Key capabilities:

- Live Infrastructure Discovery: Uses the Model Context Protocol (MCP) to analyze real-time cluster workloads and configurations with enhanced security.

- Pattern-Based Policy Authoring: Uses a library of pre-validated Rego samples to guide the AI, with generated policies following correct syntactical formats and organizational standards.

- Context-Aware Policy Logic: Generates OPA Gatekeeper policies tailored to specific namespace and resource contexts rather than using generic, one-size-fits-all templates.

- Bi-Directional Governance: Bridges the gap between high-level security intent and low-level cluster enforcement using an AI-driven orchestrator.

Key takeaways:

- Automated Governance: Eliminates the “Rego Tax” by using AI to transform live cluster analysis into production-ready OPA Gatekeeper policies.

- High-Fidelity Integration: Leverages the Model Context Protocol (MCP) to grant AI assistants direct, structured access to Kubernetes APIs for accurate, real-world data collection.

- Safety-First Deployment: Combines 30+ human-curated Red Hat best practices with a mandatory “dryrun-first” workflow to drive security without disrupting live services.

- Continuous Compliance: Enables real-time drift detection and “Shift-Left” security by integrating AI-generated guardrails directly into CI/CD pipelines.

- Force Multiplier for Red Hat ACS: This workflow acts as a front-end engine that feeds hardened, preventative policies into enterprise platforms like Red Hat Advanced Cluster Security for fleet-wide enforcement.

Architecture

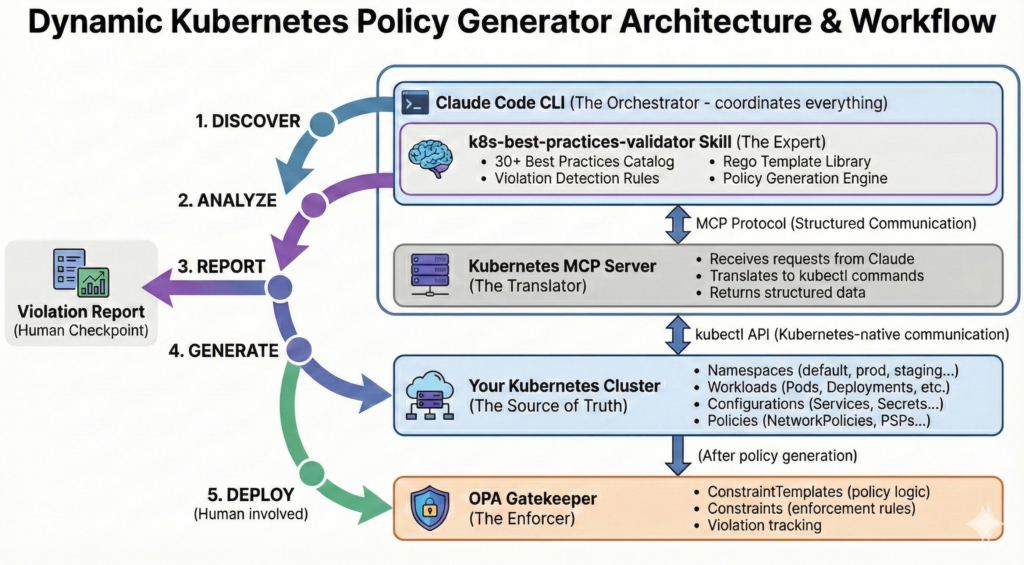

Understanding how the Dynamic Policy Generator works requires looking at two things: how the components communicate and the logical journey from a messy cluster to a hardened environment.

System overview

As we established, the system’s core is the structured communication between the AI orchestrator and the Kubernetes MCP Server bridge. Working in tandem with OPA Gatekeeper, these components transform high-level security intent into enforceable cluster reality.

Unlike traditional AI interactions where you might copy-paste YAML into a chat box, this architecture uses the Model Context Protocol (MCP) to provide the AI assistant with direct, structured access to your cluster API. This creates a high-fidelity bridge that translates the orchestrator’s intents into precise kubectl actions and returns machine-readable data for analysis.

- Claude Skills (The Orchestrator): The orchestrator runs a specialized k8s-best-practices-validator skill that encapsulates deep domain expertise. Skills act as automated workflow engines: they provide specialized instructions in Markdown that steer the large language model through a fixed sequence of steps. Rather than leaving the AI to “guess,” the skill captures expert knowledge so that violations are identified against curated standards.

- The Kubernetes MCP Server (The Bridge): This server acts as the security-forward interface layer. It moves beyond static text by allowing the assistant to dynamically “query and reason” across live resources, translating the orchestrator’s intents into precise, schema-validated kubectl actions. By returning structured data instead of raw strings, it enables the orchestrator to verify deep fields (such as securityContext or resources.limits) with the precision required for production-grade governance.

- OPA Gatekeeper: A powerful, industry-standard policy engine designed specifically for cloud-native environments, enforcing consistent policies across technology stacks through its declarative language, Rego. Despite its power, Rego’s complexity remains a significant hurdle for many teams. Our system flattens this learning curve by automatically generating tested, reliable OPA Gatekeeper policies based on actual violations detected in a live cluster. The generated policies are applied to Gatekeeper, which runs inside the cluster as a native admission controller and enforces the new standards in real time.

By integrating these components, we replace the “manual copy-paste” loop with a seamless, Intent-as-Governance workflow where the AI understands the cluster’s state as clearly as a human engineer.

The workflow: From discovery to enforcement

We’ve broken the process down into five distinct phases. While the AI does the heavy lifting, the human remains the final authority, specifically at the reporting and deployment stages.

Phase 1: Discover

The process begins with a deep crawl. Claude uses the MCP server to map the cluster landscape. It identifies namespaces (filtering out system-critical ones like kube-system), lists active workloads (Pods, Deployments, StatefulSets), and captures their current configurations. The user keeps the final say over which namespaces are scanned.
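As a rough illustration of the namespace selection described above, the sketch below filters a namespace list and then narrows it to the user's choice. The exclusion set, the prefix rule, and the function name are assumptions made for this example; the actual skill may exclude a different set.

```python
# Hypothetical defaults for illustration; the real skill may use a different list.
SYSTEM_NAMESPACES = {"kube-system", "kube-public", "kube-node-lease", "gatekeeper-system"}
SYSTEM_PREFIXES = ("openshift-",)


def select_namespaces(all_namespaces, user_choice=None):
    """Return the namespaces to scan, preserving cluster order.

    System namespaces are always dropped; user_choice (if given) narrows
    the remaining candidates further.
    """
    candidates = [
        ns for ns in all_namespaces
        if ns not in SYSTEM_NAMESPACES and not ns.startswith(SYSTEM_PREFIXES)
    ]
    if user_choice is None:
        return candidates
    wanted = set(user_choice)
    return [ns for ns in candidates if ns in wanted]


if __name__ == "__main__":
    cluster = ["default", "kube-system", "dev", "prod", "openshift-monitoring"]
    print(select_namespaces(cluster))           # ['default', 'dev', 'prod']
    print(select_namespaces(cluster, ["dev"]))  # ['dev']
```

In this sketch a user selection can only narrow the scan; it cannot re-include a system namespace.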

Phase 2: Analyze

Once the data is gathered, the Best Practices Validator skill goes to work. It compares the live YAML against a library of 30+ Red Hat-hardened standards. It looks for specific “anti-patterns,” such as:

- Containers running as root.

- Missing CPU/Memory limits.

- Privilege escalation enabled.

- Images using the :latest tag.
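To make the four anti-patterns above concrete, here is a minimal sketch of how each one could be detected on a single container spec (as a plain dictionary). The rule names and the strict handling of unset fields are assumptions for this example, not the skill's actual logic, and the root check looks only at runAsNonRoot, not runAsUser.

```python
def uses_latest_tag(image):
    """True if the image reference resolves to the mutable 'latest' tag."""
    if "@" in image:                      # pinned by digest, so the tag is irrelevant
        return False
    name = image.rsplit("/", 1)[-1]       # drop registry host[:port] and path
    if ":" not in name:
        return True                       # assumption: an untagged image counts as :latest
    return name.rsplit(":", 1)[1] == "latest"


def check_container(container, pod_security_context=None):
    """Return the names of the anti-patterns found in one container spec."""
    pod_sc = pod_security_context or {}
    sc = container.get("securityContext") or {}
    findings = []

    # A container-level runAsNonRoot overrides the pod-level value.
    if sc.get("runAsNonRoot", pod_sc.get("runAsNonRoot")) is not True:
        findings.append("may-run-as-root")

    limits = (container.get("resources") or {}).get("limits") or {}
    if "cpu" not in limits or "memory" not in limits:
        findings.append("missing-limits")

    # Strict reading: anything other than an explicit False is flagged.
    if sc.get("allowPrivilegeEscalation") is not False:
        findings.append("privilege-escalation")

    if uses_latest_tag(container.get("image", "")):
        findings.append("latest-tag")
    return findings


if __name__ == "__main__":
    print(check_container({"name": "web", "image": "nginx:latest"}))
```

A bare container such as `{"name": "web", "image": "nginx:latest"}` trips all four checks, while a container with explicit limits, a pinned tag, and a hardened securityContext returns an empty list.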

Phase 3: Report (the human checkpoint)

Instead of silently fixing things, the tool generates a Violation Report. This categorized summary shows you exactly where your cluster stands.

Why this matters: It gives the Platform Engineer a “hit list” of risks. You can see, for example, that while your prod namespace is clean, your dev namespace has 40 containers running without resource limits.

Phase 4: Generate

This is the Rego generation phase. For every violation you choose to address, Claude selects the appropriate template from its library and populates it with your cluster’s specific context. It generates two files:

- ConstraintTemplate: The reusable Rego logic.

- Constraint: The specific implementation targeting your namespaces and resource kinds.
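The shape of the two generated objects can be sketched as follows. The Rego snippet, the kind name K8sDisallowLatestTag, and the helper functions are illustrative assumptions, not the repository's actual templates. The objects are built as dictionaries and printed as JSON, which kubectl accepts alongside YAML.

```python
import json

# Illustrative Rego only: it inspects spec.containers of Pods and nothing else.
REGO = """
package k8sdisallowlatesttag

violation[{"msg": msg}] {
  container := input.review.object.spec.containers[_]
  endswith(container.image, ":latest")
  msg := sprintf("container %v uses the :latest tag", [container.name])
}
"""


def constraint_template(kind, rego):
    """Reusable logic: defines a new constraint kind backed by Rego."""
    return {
        "apiVersion": "templates.gatekeeper.sh/v1",
        "kind": "ConstraintTemplate",
        # Gatekeeper expects the template name to be the lowercased kind.
        "metadata": {"name": kind.lower()},
        "spec": {
            "crd": {"spec": {"names": {"kind": kind}}},
            "targets": [{"target": "admission.k8s.gatekeeper.sh", "rego": rego}],
        },
    }


def constraint(kind, name, namespaces, kinds, action="dryrun"):
    """Specific instance: where the template applies and how it is enforced."""
    return {
        "apiVersion": "constraints.gatekeeper.sh/v1beta1",
        "kind": kind,
        "metadata": {"name": name},
        "spec": {
            "enforcementAction": action,
            "match": {"kinds": kinds, "namespaces": namespaces},
        },
    }


if __name__ == "__main__":
    template = constraint_template("K8sDisallowLatestTag", REGO)
    instance = constraint(
        "K8sDisallowLatestTag", "disallow-latest-tag-dev",
        namespaces=["dev"], kinds=[{"apiGroups": [""], "kinds": ["Pod"]}],
    )
    print(json.dumps(template, indent=2))
    print(json.dumps(instance, indent=2))
```

Keeping the template and the constraint separate means the same Rego can be reused with different namespace scopes or enforcement actions.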

Phase 5: Deploy (the safety first approach)

Finally, the policies are saved to your local directory. We prioritize a Dryrun-First philosophy. By default, the generated policies are set to enforcementAction: dryrun. This allows you to apply them to the cluster and monitor the “would-be” blocks in the Gatekeeper logs before you ever risk breaking a developer’s deployment.
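Besides the logs, Gatekeeper's audit process records violations in each constraint's status, so the "would-be" blocks can also be read from the object itself. The sketch below summarizes that status from a constraint fetched as JSON (for example with kubectl get and -o json). The function name and the sample data are assumptions for this example.

```python
def summarize_dryrun(constraint_obj):
    """Return (total, lines) describing what a dryrun constraint would have blocked.

    Gatekeeper caps how many violations it stores per constraint, so the
    reported total can be larger than the number of detail lines.
    """
    status = constraint_obj.get("status") or {}
    lines = []
    for v in status.get("violations") or []:
        where = f'{v.get("namespace", "<cluster>")}/{v.get("kind")}/{v.get("name")}'
        lines.append(f'{where}: {v.get("message")}')
    return status.get("totalViolations", len(lines)), lines


if __name__ == "__main__":
    # Hypothetical audit result for illustration.
    sample = {
        "status": {
            "totalViolations": 1,
            "violations": [{
                "enforcementAction": "dryrun",
                "kind": "Pod",
                "name": "web-0",
                "namespace": "dev",
                "message": "container web uses the :latest tag",
            }],
        }
    }
    total, lines = summarize_dryrun(sample)
    print(total)      # 1
    print(lines[0])   # dev/Pod/web-0: container web uses the :latest tag
```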

Inside the Rego generation

At first glance, having an LLM dynamically write Rego code sounds like a dream. After all, LLMs are capable of generating code across numerous languages and can iteratively refine their actions through multiple turns until they reach an accurate response. However, when it comes to security and compliance policies that gate production deployments, being “capable” is simply not enough. For production-grade clusters, we require logic that is correct, tested, and reliable, leaving no room for hallucinations.

Template-based generation vs. hallucination prevention

The core challenge with LLMs is the “Hallucination Problem”. Despite their sophistication, they can generate plausible-looking but incorrect or insecure code. If you ask an LLM to write Rego from scratch, you risk:

- Logical Flaws: Syntactically correct rules that fail to catch actual violations.

- Missing Edge Cases: Forgetting to scan initContainers or ephemeralContainers.

- Path Errors: Using incorrect field paths in complex Kubernetes resource structures.

- Subtle Vulnerabilities: Security gaps introduced through logic errors or inefficient Rego idioms.

In other words, they confidently generate wrong answers.

Our solution: Curated templates with AI orchestration

Instead of raw generation, we use a hybrid approach. The k8s-best-practices-validator skill provides a library of pre-written Rego templates, each corresponding to a specific Red Hat best practice. These templates are:

- Human-Curated: Written and peer-reviewed.

- Quality Assured: Verified against the OPA testing framework with dedicated unit and integration tests.

- Optimized: Written with modern Rego syntax (e.g., import future.keywords.if).
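The missed edge cases and path errors listed earlier are the kind of detail a curated template pins down once. The same idea is sketched here in Python rather than Rego: one helper resolves the pod spec for each workload kind, and another walks all three container lists. The kind-to-path table and function names are assumptions for this example.

```python
# Where the pod spec lives inside each workload kind.
POD_SPEC_PATHS = {
    "Pod": ("spec",),
    "Deployment": ("spec", "template", "spec"),
    "StatefulSet": ("spec", "template", "spec"),
    "DaemonSet": ("spec", "template", "spec"),
    "Job": ("spec", "template", "spec"),
    "CronJob": ("spec", "jobTemplate", "spec", "template", "spec"),
}

# All three lists must be scanned, not just "containers".
CONTAINER_FIELDS = ("containers", "initContainers", "ephemeralContainers")


def pod_spec_of(resource):
    """Return the pod spec dictionary for a supported workload kind."""
    path = POD_SPEC_PATHS.get(resource.get("kind"))
    if path is None:
        raise ValueError(f"unsupported kind: {resource.get('kind')!r}")
    node = resource
    for key in path:
        node = node.get(key) or {}
    return node


def iter_containers(resource):
    """Yield (field, container) pairs across every container list."""
    spec = pod_spec_of(resource)
    for field in CONTAINER_FIELDS:
        for container in spec.get(field) or []:
            yield field, container


if __name__ == "__main__":
    deployment = {
        "kind": "Deployment",
        "spec": {"template": {"spec": {
            "initContainers": [{"name": "migrate"}],
            "containers": [{"name": "web"}],
        }}},
    }
    print([(f, c["name"]) for f, c in iter_containers(deployment)])
    # [('containers', 'web'), ('initContainers', 'migrate')]
```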

Context-aware policies: No more “one size fits all”

Static templates often fail because they don’t account for the unique landscape of your cluster. Our generator creates context-aware policies by leveraging live data from the MCP server to provide intelligence that generic templates lack:

- Dynamic Namespace Matching: The tool analyzes where violations actually occur. If non-root violations are only found in dev and staging, the generated Constraint is automatically scoped to those specific namespaces.

- Resource Kind Targeting: The tool precisely identifies affected resources. If a violation only impacts StatefulSets, the policy will target that specific apiGroup and kind, preventing unnecessary overhead on other resources.

- Intelligent Exclusions: The system automatically identifies and excludes system-critical namespaces (like kube-system) to ensure security policies don’t accidentally disrupt the cluster’s management layer.
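A minimal sketch of how the three behaviors above could combine: given the violations found for one rule, it derives the match block of a Constraint. The violation record shape, the API_GROUPS table, and the function name are assumptions for this example.

```python
# API group for each workload kind ("" is the core group).
API_GROUPS = {"Pod": "", "Deployment": "apps", "StatefulSet": "apps", "DaemonSet": "apps"}


def build_match(violations, excluded=("kube-system",)):
    """Derive a Constraint match block scoped to where violations occurred."""
    in_scope = [v for v in violations if v["namespace"] not in excluded]

    # Dynamic namespace matching: only namespaces that actually had violations.
    namespaces = sorted({v["namespace"] for v in in_scope})

    # Resource kind targeting: group affected kinds by API group.
    kinds_by_group = {}
    for v in in_scope:
        # Raises KeyError for kinds missing from the table above.
        kinds_by_group.setdefault(API_GROUPS[v["kind"]], set()).add(v["kind"])
    kinds = [
        {"apiGroups": [group], "kinds": sorted(names)}
        for group, names in sorted(kinds_by_group.items())
    ]
    return {"kinds": kinds, "namespaces": namespaces}


if __name__ == "__main__":
    found = [
        {"namespace": "dev", "kind": "StatefulSet"},
        {"namespace": "staging", "kind": "StatefulSet"},
        {"namespace": "kube-system", "kind": "Pod"},
    ]
    print(build_match(found))
    # {'kinds': [{'apiGroups': ['apps'], 'kinds': ['StatefulSet']}],
    #  'namespaces': ['dev', 'staging']}
```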

The result: Reliability meets intelligence

By combining the reliability of human expertise with the intelligence of AI, we create a system that is both powerful and trustworthy.

| Feature | From Human Templates | From AI Orchestration |
| --- | --- | --- |
| Integrity | Correctness and performance optimization | Cluster-specific customization |
| Safety | Edge case handling (empty securityContext, etc.) | Intelligent namespace scoping |
| Utility | Standardized error messages and remediation guidance | Dynamic generation based on actual violations |

Getting started: From zero to your first policy in under 10 minutes

Setting up the Dynamic Kubernetes Policy Generator is designed to be frictionless. By the end of this section, you will have analyzed a live cluster and generated production-ready OPA policies—all without writing a single line of Rego code manually.

Prerequisites: The power tools

Before we dive in, ensure your local environment is equipped with these essentials:

- Node.js: Required to run the Kubernetes MCP Server. This server acts as the “translator,” turning Claude’s high-level requests into structured, type-safe API calls rather than fragile shell commands.

- kubectl: The tool uses your existing ~/.kube/config. If you can run kubectl cluster-info, the generator can see your cluster.

- LLM-Powered CLI Assistant: An agentic terminal assistant (such as Claude Code) to orchestrate the multi-step workflow, manage file generation, and interface with the Kubernetes MCP server.

Setting up the repository

Clone the toolkit and enter the workspace. The configuration is pre-baked, so there’s very little manual “wiring” required.

$ git clone https://github.com/redhat-et/gatekeeper-policy-generator.git
$ cd gatekeeper-policy-generator

What’s inside?

- .claude/mcp.json: Automatically tells Claude to boot the Kubernetes MCP server using npx.

- skills/k8s-best-practices-validator/: Contains the logic, 30+ best practices, and Rego templates.

Your first validation scan: A walkthrough

Follow these steps to move from an empty terminal to a full compliance report.

Step 1: Verify your context

The tool analyzes whichever cluster your current kubectl context points to. Verify it now:

$ kubectl config current-context

Step 2: Launch Claude Code

Type claude in your terminal. On the first run, it will automatically download the MCP server via npx. You’ll see a confirmation:

Connected to: <your-cluster-name>

Step 3: Invoke the skill

Simply ask Claude to start the process:

> Validate my cluster against Red Hat best practices

Step 4: Discovery & collection

The LLM will now:

- Filter Namespaces: It automatically ignores system namespaces like kube-system to avoid “noise.”

- Collect Resources: It retrieves the full YAML specifications for Pods, Deployments, StatefulSets, and NetworkPolicies.

Step 5: The violation report

The LLM presents a data-driven “moment of truth.” Every violation includes the risk level, the affected resource, and a YAML snippet showing you exactly how to fix it.

Severity Levels:

- Critical: Immediate risk (e.g., unapproved registries). Fix today.

- High: Significant security/stability concerns (e.g., running as root). Fix this week.

- Medium/Low: Operational (e.g., missing labels).
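The grouping behind such a report can be sketched in a few lines. The violation record fields (severity, namespace, resource, rule) and the sample data are hypothetical; the actual report format is produced by the skill.

```python
SEVERITY_ORDER = ("Critical", "High", "Medium", "Low")


def group_by_severity(violations):
    """Group violations by severity, most urgent first, skipping empty levels."""
    grouped = {level: [] for level in SEVERITY_ORDER}
    for v in violations:
        grouped[v["severity"]].append(v)
    return {level: items for level, items in grouped.items() if items}


def render_report(violations):
    """Render a plain-text summary with one section per severity level."""
    lines = []
    for level, items in group_by_severity(violations).items():
        lines.append(f"{level} ({len(items)})")
        for v in items:
            lines.append(f'  {v["namespace"]}/{v["resource"]}: {v["rule"]}')
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical findings for illustration.
    findings = [
        {"severity": "Medium", "namespace": "dev", "resource": "web", "rule": "missing labels"},
        {"severity": "Critical", "namespace": "dev", "resource": "api", "rule": "unapproved registry"},
    ]
    print(render_report(findings))
```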

What happened under the hood? (The technical flow)

It’s important to understand that this isn’t just “chatting” with an AI—it’s a multi-phase technical execution:

| Phase | Activity | Behind the Scenes |
| --- | --- | --- |
| 1. MCP Handshake | Connectivity | The AI-powered tool executes npx kubernetes-mcp-server, which authenticates via your kubeconfig. |
| 2. Discovery | Context Building | The assistant lists all namespaces and resources to build an in-memory model of the cluster. |
| 3. Deep Inspection | YAML Parsing | The assistant requests the full resource spec (e.g., securityContext) via MCP to check deep fields. |
| 4. Analysis | Reasoning | The AI orchestrator compares the spec against the 30+ rules in the Skill file. |
| 5. Reporting | Synthesis | Violations are grouped by severity and formatted with remediation guidance. |

Next steps: To enforce or not to enforce?

After presenting its findings, the system prompts for a decision: should it now proceed to generate the ConstraintTemplates and Constraints necessary for active enforcement?

You have three paths:

- Immediate Enforcement: Generate policies in dryrun mode for all high-priority items.

- Targeted Rollout: Generate policies only for specific categories (e.g., “Just Security”).

- Remediation First: Use the report as a “to-do” list to fix the workloads manually before turning on any automated gates.

To transform this solution from a powerful prototype into production-grade infrastructure, we must treat AI-generated policies with the same rigor as any other mission-critical code. This involves moving beyond a single “Analyze-and-Apply” step toward a comprehensive Policy Lifecycle Management system integrated directly into your skills.

Conclusion: The horizon of AI-driven platform engineering

The integration of large language models, MCP, and OPA Gatekeeper marks a fundamental shift in Kubernetes governance. We are moving away from manual, static policy authorship toward a dynamic, data-driven workflow that transforms systems security from a traditional friction point into a seamless, automated service.

As we look toward the future, the “Policy-as-Code” movement is evolving into “Intent-as-Governance.” In this new paradigm, platform teams define high-level security intentions, and AI agents—equipped with structured tools like MCP—continuously bridge the gap between those intentions and the live cluster state. This approach acts as a powerful force multiplier for enterprise security teams, specifically helping Red Hat Advanced Cluster Security (ACS) and Red Hat Advanced Cluster Management (RHACM) users effectively bridge the gap between detection and enforcement. By leveraging the reasoning capabilities of large language models to rapidly produce preventative OPA policies, teams can transition from manual authorship to a high-speed pipeline—paving the way for:

- Self-Healing Compliance: AI detection of configuration drift followed by automated remediation through generated PRs.

- Contextual Guardrails: Policies that automatically adapt based on whether a workload is in a sandbox, staging, or production environment.

- Shift-Left Education: Developers receiving real-time, AI-generated feedback explaining why their YAML was blocked and how to fix it according to company standards.

Moving to production: A disciplined approach

While the speed of AI-driven generation is impressive, moving from a local demo to an enterprise-grade solution requires a disciplined, multi-layered approach to deliver stability and trust:

- Sandbox Validation: Always validate generated policies in a staging environment to ensure the AI-generated Rego logic does not interfere with critical infrastructure components.

- Automated Evaluation & Unit Testing: Treat policies as mission-critical code. Incorporate Python evaluation scripts for schema integrity and OPA unit tests (opa test) directly into the pipeline to verify logic against both ‘known-good’ and ‘known-bad’ YAML samples, ensuring nothing gets deployed without verification.

- Mandatory “Dryrun” Period: Every policy must spend one to two weeks in dryrun mode to monitor logs for false positives before being moved to deny mode.

- Human-in-the-Loop GitOps: A human must remain the final authority; all generated policies should be checked into a version-controlled repository for peer review by a security engineer before final deployment via your CI/CD pipeline.
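One way to encode the dryrun period and review requirement as a pipeline gate is sketched below. The function name, the 14-day default (the upper end of the one-to-two-week window), and the rule that no unresolved dryrun violations may remain are assumptions for this example, not part of the toolkit.

```python
from datetime import date


def promotion_blockers(dryrun_since, today, open_violations, peer_reviewed, min_days=14):
    """Return the reasons a policy may not yet move from dryrun to deny.

    An empty list means the policy is eligible for promotion.
    """
    blockers = []
    days_observed = (today - dryrun_since).days
    if days_observed < min_days:
        blockers.append(f"only {days_observed} of {min_days} dryrun days observed")
    if open_violations > 0:
        blockers.append(f"{open_violations} dryrun violations still unresolved")
    if not peer_reviewed:
        blockers.append("policy has not been peer reviewed")
    return blockers


if __name__ == "__main__":
    print(promotion_blockers(date(2025, 1, 1), date(2025, 1, 8), 0, True))
    # ['only 7 of 14 dryrun days observed']
    print(promotion_blockers(date(2025, 1, 1), date(2025, 1, 15), 0, True))
    # []
```

Returning the list of blockers, rather than a bare boolean, gives the reviewer a reason to act on when a promotion is refused.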

By combining the reliability of human expertise with the intelligence and reasoning capabilities of large language models, platform teams can finally move at the speed of development without compromising on systems security or operational excellence.