AI systems are no longer just single-purpose models. Agentic AI refers to software systems designed to carry out complex tasks and solve problems with limited human supervision. It’s a step beyond generative AI, which creates content, to AI that plans, retains memory, and executes tasks. Agentic AI can invoke tools, call application programming interfaces (APIs), and even orchestrate other agents. These agents make access requests, fetch data, and chain reasoning steps. The resulting agent-to-agent (A2A) and Model Context Protocol (MCP) interactions create sophisticated workflows, but they also introduce new trust boundaries.

This is a bonus article building on our three-week deep dive on AI performance and trust. You can see the previous articles here:

Note: Red Hat’s Emerging Technologies blog includes posts that discuss technologies that are under active development in upstream open source communities and at Red Hat. We believe in sharing early and often the things we’re working on, but we want to note that unless otherwise stated the technologies and how-tos shared here aren’t part of supported products, nor promised to be in the future.

The question now becomes: Can we trust these boundaries? Today, the answer is mostly no. In a typical deployment on Red Hat OpenShift or Kubernetes, agents and tools register with the Red Hat build of Keycloak (or another identity provider) and authenticate using a static client_id and client_secret. Tokens are then obtained through the client credentials flow, which carries no user context. As a result, agent-to-agent and agent-to-tool communication carries no user identity, and downstream tools sometimes rely on shared API tokens with broad access. Many security breaches in recent years have exploited implicit trust between components, the very gaps that agents and MCP systems amplify. By enforcing identity and authorization at every step, zero trust reduces the attack surface and makes lateral movement far harder.

These gaps are what this blog series addresses. We’ll build step by step, showing how to apply zero trust (ZT) design principles to autonomous agent systems, with working code in the redhat-et/zt-autonomous-agent-blog repository.

The problem: Incomplete trust boundaries in AI-native systems

When AI agents interact with services, tools, and other agents, each new hop adds a hidden assumption: that downstream calls can be trusted simply because the upstream call was trusted. In zero trust terms, as framed by National Institute of Standards and Technology (NIST) Special Publication (SP) 800-207, this is a transaction boundary problem.

In a three-party interaction, say A (Client), B (Agent Platform), and C (Downstream Tool or Agent), authentication normally happens only between A and B, and then separately between B and C. From A’s perspective, however, the real business transaction is not just with B, but with (B+C) acting together. Without zero trust, A implicitly trusts C through B, even though no explicit trust was ever established. Zero trust demands that we remove this implicit link by extending explicit identity, authorization, and attestation across the full transaction path A<->(B+C), not just at each local hop.

Without zero trust applied end-to-end, three patterns emerge:

1. Endpoint protection is surface-deep

Authentication is often enforced only at the entrypoint of the system. Once a token is issued, it is accepted broadly across agent APIs, reasoning APIs, inference APIs, and tool APIs without scope-specific validation.

This makes endpoints indistinguishable from one another in terms of access control. The principle of least privilege collapses when every token grants the same breadth of access.
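As a minimal sketch of what scope-specific validation could look like, the following Python snippet checks that a token's audience and scope match the endpoint being called. The endpoint prefixes, audience names, and scope names are hypothetical, and the claims are assumed to come from a token whose signature has already been verified.

```python
# Hypothetical mapping: each endpoint family requires its own audience and scope.
REQUIRED = {
    "/agent": ("agent-api", "agent.invoke"),
    "/inference": ("inference-api", "inference.run"),
    "/tool": ("mcp-tool", "tool.read"),
}


def is_authorized(claims: dict, path: str) -> bool:
    """Allow a call only if the verified claims match this endpoint's audience and scope."""
    for prefix, (audience, scope) in REQUIRED.items():
        if path == prefix or path.startswith(prefix + "/"):
            aud = claims.get("aud", [])
            # The "aud" claim may be a single string or a list of strings.
            audiences = [aud] if isinstance(aud, str) else list(aud)
            # The "scope" claim is a space-delimited string.
            scopes = claims.get("scope", "").split()
            return audience in audiences and scope in scopes
    # Unknown endpoints are denied by default.
    return False
```

With a check like this, a token issued for the inference API is rejected at a tool endpoint even though it is otherwise valid, which is the distinction a single broad token erases.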

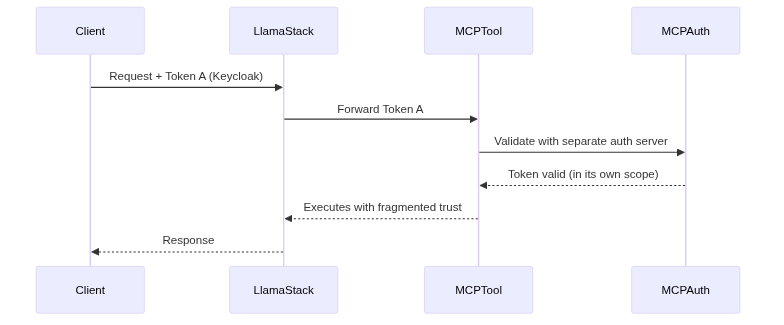

2. The chain of trust breaks across MCP boundaries

Within agent-to-agent or agent-to-tool interactions, tokens are frequently passed through to downstream services, or static API keys are configured as stand-ins. If a downstream agent enforces its own independent authentication model, it effectively fragments the identity chain: the upstream assurance provided by the gateway or orchestrator no longer applies. Without delegated token exchange, trust established at one layer does not propagate securely to the next.

Here’s what this looks like in many current agent deployments:

In this scenario, the client authenticates with the identity provider, but downstream agents or tools rely on separate authentication systems. The trust chain is broken: there’s no single source of truth, no delegated scoping, and no continuous enforcement.

3. Verification without continuity

Even where tokens exist, enforcement is shallow: expiration checks are inconsistent, error signals are opaque, and requests are not bound to attested workloads. This results in identity without assurance, authorization without context, and observability without auditability. Continuous verification and workload attestation are missing, leaving long-lived blind spots in the system.
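To make the idea of per-call verification concrete, here is a small Python sketch that checks expiry and revocation on every request and records each decision. The in-memory revocation set and audit list are illustrative assumptions only; a real deployment would more likely consult the identity provider (for example, through OAuth 2.0 token introspection, RFC 7662) and write to a durable audit store.

```python
import time

# Hypothetical in-memory stand-ins for a revocation service and an audit store.
REVOKED_JTIS = set()
AUDIT_LOG = []


def verify_call(claims: dict, now=None) -> bool:
    """Check expiry and revocation for a single call and record the decision."""
    now = time.time() if now is None else now
    if claims.get("exp", 0) <= now:
        decision, reason = "deny", "token_expired"
    elif claims.get("jti") in REVOKED_JTIS:
        decision, reason = "deny", "token_revoked"
    else:
        decision, reason = "allow", "ok"
    # Every decision is recorded with an explicit reason, not only the failures.
    AUDIT_LOG.append({
        "ts": now,
        "sub": claims.get("sub"),
        "jti": claims.get("jti"),
        "decision": decision,
        "reason": reason,
    })
    return decision == "allow"
```

Calling `verify_call` on each request, rather than once at login, means a revoked or expired token stops working at the next hop, and the audit entries carry a reason that can be inspected later.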

Zero trust principles for autonomous agent systems

To address these gaps, we extend zero trust principles specifically for agent-to-agent and agent-to-tools environments:

- Identity everywhere + contextual authorization: All APIs must be scoped. Tokens validated at inference endpoints should not automatically apply to models or tools. This helps inference, model, and tool APIs each enforce their own trust boundaries, rather than relying on one broad token.

- Delegated token exchange: Instead of forwarding the client token to downstream agents or tools, each call must use a scoped token issued specifically for that hop. This prevents the broken trust chain across MCP boundaries.

- Continuous verification + auditability: Expiry and revocation must be checked on every call, not just once at login. Audit trails must record decisions for forensics. This closes the gap of shallow verification.

- Workload attestation: Requests are processed only if the downstream agent or tool proves it’s running in a trusted state. This extends zero trust beyond identity into runtime assurance.

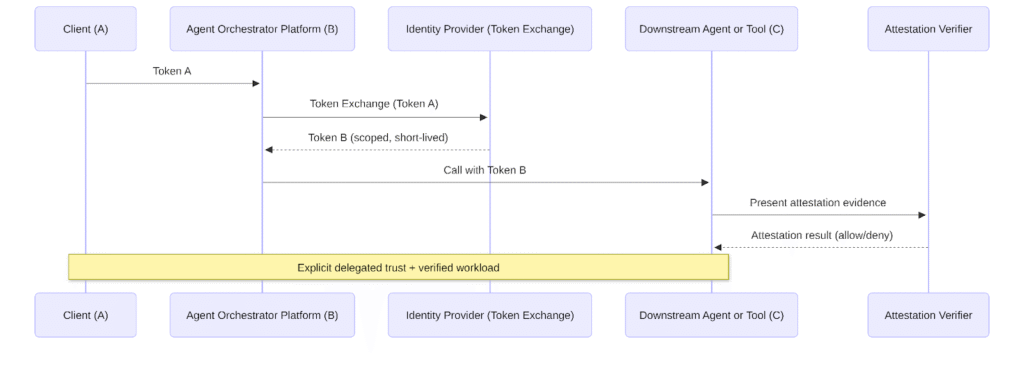

Delegated token exchange

Token exchange is defined in OAuth 2.0 Token Exchange (RFC 8693). In this zero trust design, it is used not only for downscoping, but also to preserve explicit on-behalf-of semantics.

The client presents Token A (the incoming access token) to Keycloak at the token exchange endpoint.

Along with Token A, it specifies:

- A different audience (e.g., MCP tool)

- Narrower scopes (e.g., tool.read)

- A shorter lifetime
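The request described above can be sketched in Python as the form-encoded body of an RFC 8693 token exchange call. The grant type and token type URNs come from the RFC; the token value, audience, and scope are placeholders. RFC 8693 defines no standard request parameter for token lifetime, so this sketch assumes the shorter lifetime is applied by identity provider policy rather than requested by the client.

```python
from urllib.parse import urlencode

# URNs defined by RFC 8693.
TOKEN_EXCHANGE_GRANT = "urn:ietf:params:oauth:grant-type:token-exchange"
ACCESS_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"


def build_exchange_request(token_a: str, audience: str, scopes: list[str]) -> str:
    """Return the form-encoded body of a token exchange request for one hop."""
    return urlencode({
        "grant_type": TOKEN_EXCHANGE_GRANT,
        "subject_token": token_a,                 # Token A, the incoming access token
        "subject_token_type": ACCESS_TOKEN_TYPE,
        "requested_token_type": ACCESS_TOKEN_TYPE,
        "audience": audience,                     # the new, narrower audience
        "scope": " ".join(scopes),                # space-delimited narrower scopes
    })


# Placeholder values for illustration only.
body = build_exchange_request("<token-a>", "mcp-tool", ["tool.read"])
```

The body would be sent as a POST to the identity provider's token endpoint together with the exchanging client's own authentication, which is omitted here.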

The identity provider (e.g., Keycloak) validates Token A and issues Token B that:

- Is derived from Token A

- Preserves the original subject (sub)

- Encodes the exchanging client as the actor (act claim), establishing explicit on-behalf-of semantics

- Is scoped to the new audience and purpose

- Is short-lived by policy

Token B therefore represents:

Client X acting on behalf of User Y for Audience Z with constrained authority.

This helps keep delegation cryptographically preserved and auditable across service boundaries, rather than collapsing into simple impersonation or standalone service identity.

In the delegated flow, each hop receives its own short-lived, scoped credential that cryptographically captures both the caller and the delegating principal. This aligns with least privilege, auditability, and causal traceability.
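As an illustration of how a downstream service might read these semantics, the Python sketch below walks the `act` claim of an already-verified Token B payload. The claim values are made up for the example; the nesting rule (the outermost `act` is the current actor, with nested `act` claims representing earlier actors) follows RFC 8693.

```python
def delegation_chain(claims: dict) -> list[str]:
    """Return actor subjects, starting with the current (outermost) actor."""
    actors = []
    act = claims.get("act")
    while isinstance(act, dict):
        actors.append(act.get("sub", "<unknown>"))
        act = act.get("act")  # nested "act" claims are earlier actors in the chain
    return actors


def describe(claims: dict) -> str:
    """Summarize who is calling, on whose behalf, and for which audience."""
    actors = delegation_chain(claims)
    if not actors:
        return f"{claims['sub']} acting directly for {claims['aud']}"
    return f"{actors[0]} acting on behalf of {claims['sub']} for {claims['aud']}"


# Hypothetical payload of Token B after signature verification.
token_b_claims = {
    "sub": "user-y",
    "aud": "mcp-tool",
    "scope": "tool.read",
    "act": {"sub": "client-x"},
}
```

Because the original subject and the actor stay in separate claims, an audit record built from `describe(token_b_claims)` can distinguish delegation from impersonation or a standalone service identity.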

Bridging the gaps in practice

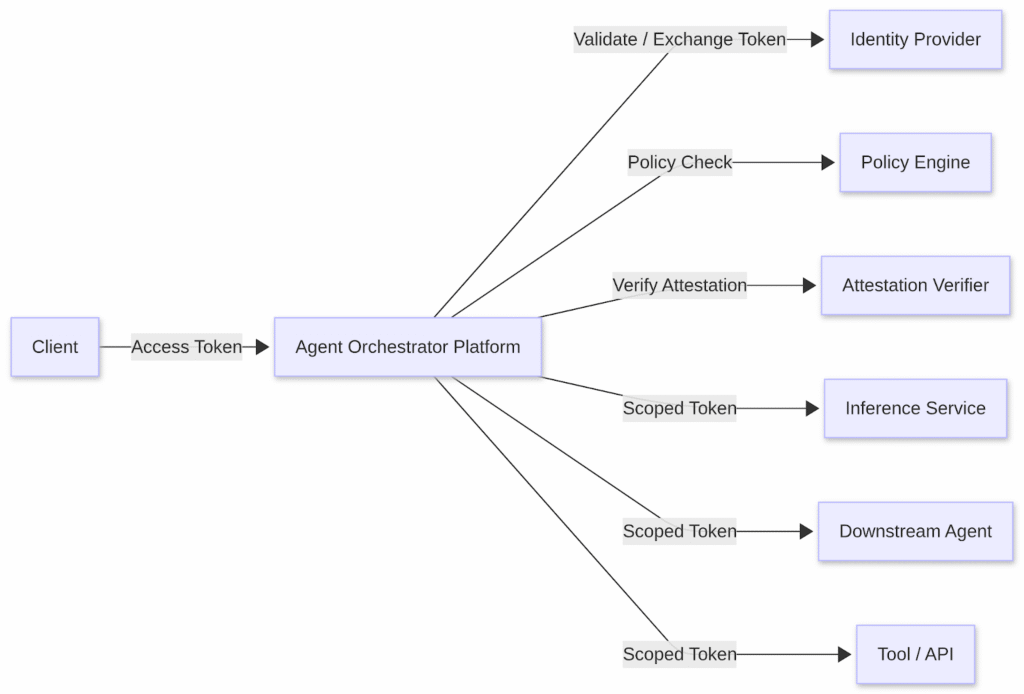

Today, many agent platforms enforce OAuth properly at their own entrypoint. But once an agent calls downstream backends, whether inference services, peer agents, or tools, the zero trust chain often breaks. Our goal is to fix that by applying:

- Scoped API enforcement

- Delegated token exchange

- Continuous verification

- Workload attestation

- Agent registration as a source of truth

Zero trust enforcement requires integration with external systems for identity, policy, and attestation.

Closing

Zero trust for AI-native systems means more than authenticating clients at the boundaries. It means extending trust boundaries across every hop, every tool call, and every workload. By enforcing identity everywhere, delegated tokens, continuous verification, and workload attestation, we can make agent ecosystems autonomous and trustworthy.